Where to create the robots.txt file

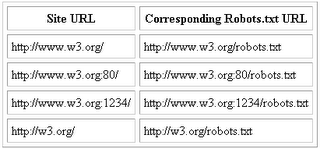

The Robot will simply look for a "/robots.txt" URL on your site, where a site is defined as a HTTP server running on a particular host and port number. For example:

Note that there can only be a single "/robots.txt" on a site. Specifically, you should not put "robots.txt" files in user directories, because a robot will never look at them. If you want your users to be able to create their own "robots.txt", you will need to merge them all into a single "/robots.txt". If you don't want to do this your users might want to use the Robots META Tag instead.

Also, remeber that URL's are case sensitive, and "/robots.txt" must be all lower-case.

So, you need to provide the "/robots.txt" in the top-level of your URL space. How to do this depends on your particular server software and configuration.

For most servers it means creating a file in your top-level server directory. On a UNIX machine this might be /usr/local/etc/httpd/htdocs/robots.txt

What to put into the robots.txt file ?

The "/robots.txt" file usually contains a record looking like this:

User-agent: *

Disallow: /cgi-bin/

Disallow: /tmp/

Disallow: /~joe/

In this example, three directories are excluded.

Note that you need a separate "Disallow" line for every URL prefix you want to exclude -- you cannot say "Disallow: /cgi-bin/ /tmp/". Also, you may not have blank lines in a record, as they are used to delimit multiple records.

Note also that regular expression are not supported in either the User-agent or Disallow lines. The '*' in the User-agent field is a special value meaning "any robot". Specifically, you cannot have lines like "Disallow: /tmp/*" or "Disallow: *.gif".

What you want to exclude depends on your server. Everything not explicitly disallowed is considered fair game to retrieve. Here follow some examples:

To exclude all robots from the entire server

User-agent: *

Disallow: /

To allow all robots complete access

User-agent: *

Disallow:

Or create an empty "/robots.txt" file.

To exclude all robots from part of the server

User-agent: *

Disallow: /cgi-bin/

Disallow: /tmp/

Disallow: /private/

To exclude a single robot

User-agent: BadBot

Disallow: /

To allow a single robot

User-agent: WebCrawler

Disallow:

User-agent: *

Disallow: /

To exclude all files except one

This is currently a bit awkward, as there is no "Allow" field. The easy way is to put all files to be disallowed into a separate directory, say "docs", and leave the one file in the level above this directory:

User-agent: *

Disallow: /~joe/docs/

Alternatively you can explicitly disallow all disallowed pages:

User-agent: *

Disallow: /~joe/private.html

Disallow: /~joe/foo.html

Disallow: /~joe/bar.html

0 Comments:

Post a Comment

<< Home